Epic Presentation-Fail Yields Real-World Prototyping Lessons for Government

Recently, I traveled to Florida with a co-worker to test some service prototypes with a government audience. Long story short, once we arrived, everything went wrong.

This wasn’t my first rodeo and, as usual when presenting at someone else’s facility, we had prepared many backups for our technology setup. We had our materials on a hard drive. We had them in the cloud. We had them on external media drives, and we had emailed the files to our audience in advance. But for one reason or another, none of it worked.

Fortunately, we had printouts of a paper-based exercise with us, but even the electronic presentation meant to guide participants through that exercise didn’t work. The computer “game” was functioning, but instead of running on a projector as intended, it could now only be played on a single laptop screen.

We only had three hours’ time with the group, we needed their feedback, and we’d already traveled six hours to get there. So we proceeded using only what we had. And you know what? It went surprisingly well.

Aside from the obvious embarrassment and frustration of falling prey to Murphy’s Law, the feedback we got from this catastrophic test was just as good as, and possibly better than, what we were able to capture in previous tech-enabled tests. Here’s why (and a few of the prototyping lessons for government we learned):

My introduction was reduced to only the most important points

In government work, we tend to demonstrate our understanding of complex bureaucratic frameworks by caveating and referencing everything we say. As consultants, we also tend to spend lots of time reassuring clients that our recommendations come from demonstrable expertise and logic. Therefore, not having a carefully prepared set of slides in this context was daunting—but the format forced brevity, directness, and honesty with the audience. I had only one “slide”: the whiteboard in the room where I’d scribbled a few notes from memory of my PowerPoint presentation.

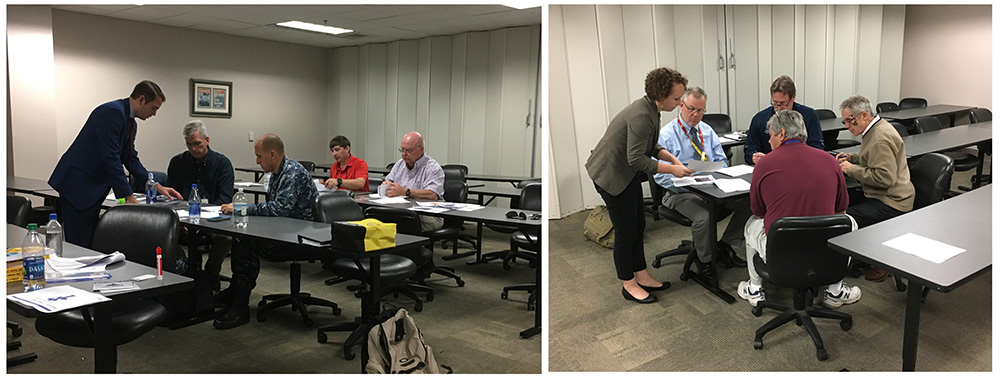

The result was that the preliminaries were over quickly and, after a few questions, we were on our way. People were moving around, asking questions, and engaging immediately at the start of the event rather than 20 minutes in.

We learned something about the structure of the offering

Rather than having 15 people move through the exercises in order, we broke into small groups. Some of the participants gathered around the laptop for the “game” while others worked through the paper packets. The results of individual exercises were roughly comparable to results collected from tests done “in order.” As a result, I now understand that a series of exercises we had previously considered to be strictly linear might be rearranged (or possibly made iterative) without seriously impacting the outcome.

Participants’ deeper engagement revealed intrinsic priorities

The clarifications I had to give while we played in the new, unintended format helped me understand which parts of the presentation mattered most. The format highlighted what participants understood intuitively and what actually requires additional preparation. The thoughtfulness and level of detail participants put into the feedback demonstrated a much deeper engagement with the prototypes than in previous tests.

It was clear what we didn’t yet understand about our own prototypes

The reason? All the answers and directions we gave participants were from memory. Watching our team explain the prototypes from memory gave me not only a list of things to improve about the prototype, but also a better understanding of what kinds of training we’ll need to do with staff to ensure everyone has the basic expertise required to facilitate in a situation like this.

Conclusion: Including these prototyping lessons for government in future events

While I love plans, and believe in the power of technology to support engagement, this “failure” of technology and planning was actually refreshing. My main takeaway from this experience was that rather than preparing presentations in the hopes that nothing breaks, sometimes the thing to do in an iterative design process really is to build the “break” in intentionally. This is a relatively common tactic in design thinking, but one that can still feel foreign and scary in the government consulting space.

I’m already brainstorming effective ways to intentionally get the same kinds of results we got from this “failure.” For others working in government, do you intentionally build in chaos when you test ideas? What works (or doesn’t work) for you? I’d love to hear your ideas.